Simulating Random Variables

Lecture 23

March 20, 2026

Review

Null Hypothesis Testing and Statistical Significance

- NHST framework: ideally develop a null hypothesis \(\mathcal{H}_0\) which is an alternative to your hypothesis \(\mathcal{H}\).

- Choose test statistic \(S\) and estimage \(\hat{S}\) from your data, then obtain null distribution of \(S\) under the assumption of \(\mathcal{H}_0\).

- Estimate \(p\)-value \(\mathbb{P}\left[S \geq \hat{S} | \mathcal{H}_0 \right]\).

- If \(p\)-value is less than pre-specified significance level \(\alpha\), declare your finding “statistically significant”.

Problems With This Framework

- Null models often chosen for computational convenience, not because it’s scientifically interesting (e.g. do we care if a coefficient is simply non-zero?)

- Null models are also not necessarily unique, which impacts test statistic and distribution.

- Type I error rates can be much higher than anticipated due to multiple comparisons or improperly applied tests.

- If null is not truly complementary to the alternative, says nothing about whether the alternative is a good hypothesis.

Random Variable Simulation

Why Simulate?

- We want to see implications of a probability model.

- We want to test statistical procedures (synthetic data simulation).

- Easier than computing integrals (Monte Carlo).

- Computational efficiency (e.g. stochastic gradient descent).

Generally: Turns calculus/analytical problems into data summary problems.

Built-In Sampling Functions

R: sample, rnorm, rbinom, etc.

Julia: rand, Distributions.rand

Python: numpy.random.rand, scipy.stats.xx.rand

What Are These Functions Doing?

Think of a biased coin with probability of heads \(\theta\).

Want to obtain a Bernoulli random variable.

What can we do without using a built-in Bernoulli function?

Coin Flip Simulation

Given heads probability \(\theta\):

- Draw \(u \sim Uniform(0, 1)\).

- If \(u < \theta\), return

H. - Else return

T.

What About Discrete Variables?

How can we generalize this strategy for discrete distributions with category probabilities \(\theta_1, \theta_2, \ldots, \theta_n\)?

Discrete Variable Simulation

Given category probabilities \(\theta_1, \theta_2, \ldots, \theta_n\):

- Draw \(u \sim Uniform(0, 1)\).

- If \(u < \theta_1\), return 1.

- If \(u < \theta_1 + \theta_2\), return 2,

- \(\vdots\)

- Else return \(n\).

Generalization: Quantile Transform Method

Given \(U \sim Uniform(0, 1)\), target CDF \(F\), \(X = F^{-1}(U)\) has CDF \(F\).

Why?

\[\mathbb{P}(X \leq a) = \mathbb{P}(F^{-1}(U) \leq a) = \mathbb{P}(U \leq F(a)) = F(a)\]

In other words, if we can generate uniform variables and calculate quantiles, can generate non-uniform variables.

Problem Solved…Right?

- Often don’t have quantile functions in closed form.

- They also often don’t have nice numerical solutions.

Using the PDF

Suppose the PDF \(f\) has support on \([a, b]\) and \(f(x) \leq M\).

What could we do to sample \(X \sim f(\cdot)\)?

Rejection Sampling Algorithm

Given pdf \(f\) for \(X\), upper bound \(M\) on \(f\) in \([a, b]\):

- Simulate uniformly: \(y \sim [a, b]\), \(u \sim [0, 1]\).

- If \(Mu < f(y)\), keep \(y\) as a sample of \(X\).

- Otherwise reject.

Rejection Sampling Visualization

Figure 2: Example of rejection sampling for a Beta distribution

Rejection Sampling Efficiency

Important: Only kept 28% of proposals.

More General Rejection Sampling

Use a proposal density \(g(\cdot)\) which “covers” target \(f(\cdot)\) and is easy to sample from.

Sample from \(g\), reject based on \(f\).

General Rejection Sampling Algorithm

Suppose \(f(x) \leq M g(x)\) for some \(1 < M < \infty\).

- Simulate a proposal \(y \sim g(x)\) (e.g. by quantile method).

- Simulate \(u \sim \text{Unif}(0, 1)\)

- If \[u < \frac{f(y)}{M g(y)},\] accept \(y\); otherwise reject.

Rejection Sampling Example

This time we kept 34% of the proposals.

Rejection Sampling Efficiency

- Probability of accepting a sample is \(1/M\), so the “tighter” the proposal distribution coverage the more efficient the sampler.

- Need to be able to compute \(M\) and sample from the proposal.

Finding a good proposal and computing \(M\) may not be easy (or possible) for complex distributions!

Bimodal Rejection Sampling Example

Code

# specify target distribution

mixmod = MixtureModel(Normal, [(-1, 0.75), (1, 0.4)], [0.5, 0.5])

x = -5:0.01:5

p1 = plot(x, pdf.(mixmod, x), lw=3, color=:red, xlabel=L"$x$", ylabel="Density", label="Target")

plot!(p1, x, 2.5 * pdf.(Normal(0, 1.5), x), lw=3, color=:blue, label="Proposal (M=2.5)")

plot!(p1, size=(550, 500))

nsamp = 10_000

M = 2.5

u = rand(Uniform(0, 1), nsamp)

y = rand(Normal(0, 1.5), nsamp)

g = pdf.(Normal(0, 1.5), y)

f = pdf.(mixmod, y)

keep_samp = u .< f ./ (M * g)

p2 = histogram(y[keep_samp], normalize=:pdf, xlabel=L"$x$", ylabel="Density", label="Kept Samples", legend=:topleft)

plot!(p2, x, pdf.(mixmod, x), linewidth=3, color=:black, label="True Target")

density!(y[keep_samp], label="Sampled Density", color=:red)

plot!(p2, size=(550, 500))

display(p1)

display(p2)Random Number Generators

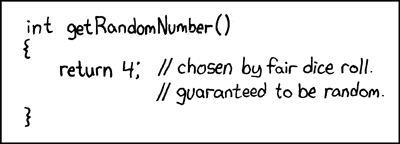

Where Do “Random” Samples Come From?

There’s no such thing as a truly random number generator!

Need to generate samples in a deterministic way that have random-like properties.

Source: XKCD 221

Pseudorandom Number Generators

We want:

- Number of \(U_i \in [a, b] \subset [0, 1]\) is \(\propto b-a\)

- No correlation between successive \(U_i\).

- No detectable dependencies in longer series.

There are several of these implemented in modern programming languages: typical default is the Mersenne Twister.

Example: Rotations

\[U_{t+1} = U_t + \alpha \mod 1\]

- If \(\alpha \neq k/n\) is irrational, this never repeats (no \(m\) such that \(m\alpha = 1\).)

- If \(\alpha = k/n\) is rational, this repeats, but with a long period for large \(n\).

(P)RNGs and Chaos

We can get similar dynamics from area-preserving chaotic dynamical systems:

- Long periods with dense orbits (well-mixing);

- Area-perserving (uniformly distributed);

- Rapid divergence of nearby points (sensitivity to initial conditions);

Example: Arnold Cat Map

\[ \begin{align*} \phi_{t+1} &= 2U_t + \phi_t \mod 1 \\ U_{t+1} &= \phi_t + U_t \mod 1 \end{align*} \]

Report only \((U_t)\): get hard to predict uniformly distributed data.

Source: Wikipedia

Cat Map vs Mersenne Twister

Code

function cat_map(N)

U = zeros(N)

ϕ = zeros(N)

U[1], ϕ[1] = rand(Uniform(0, 1), 2)

for i = 2:N

ϕ[i] = rem(U[i-1] + 2 * ϕ[i-1], 1.0)

U[i] = rem(U[i-1] + ϕ[i-1], 1.0)

end

return U

end

N = 1_000

Z = cat_map(N)

U = rand(MersenneTwister(1), N) # Mersenne Twister is not the default in Julia, but is in other languages, so using it here by explicitly setting it as the generator

p1 = scatter(Z[1:end-1], Z[2:end], xlabel=L"$U_t$", ylabel=L"$U_{t+1}$", title="Cat Map", label=false, size=(500, 500))

p2 = scatter(U[1:end-1], U[2:end], xlabel=L"$U_t$", ylabel=L"$U_{t+1}$", title="Mersenne Twister", label=false, size=(500, 500))

display(p1)

display(p2)Random Seeds

Pseudo-random numbers are deterministic, so can be repeated. The sequence depends on a seed.

- If you don’t set a seed explicitly, the computer will choose one (usually based on the date/time stamp when you execute the script or program).

- Setting a seed resets the sequence.

Set seeds to ensure reproducibility.

But…Be Careful With Seeds

- Possible for a statistical procedure to appear to work with just one seed; test across several.

- Seeds may match, but RNG algorithm may be different across different languages/versions.

Key Points and Upcoming Schedule

Simulating Random Variates

- Can generate uniform distributions using pseudorandom number generators (unstable dynamical systems).

- Transform into other distributions using:

- Quantile method;

- Rejection method.

- Default functions work well; only really have to worry about any of this when sampling from “non-nice” distributions

Upcoming Schedule

Next Classes

Next Week: Monte Carlo Simulation