Uncertainty Quantification

Lecture 27

April 6, 2026

Review

Monte Carlo Simulation

- Monte Carlo Principle: Approximate \[\mathbb{E}_f[h(X)] = \int h(x) f(x) dx \approx \frac{1}{n} \sum_{i=1}^n h(X_i)\]

- The Monte Carlo estimate is unbiased, but beware of (and always report) its standard error \(\sigma_n = \sigma_Y / \sqrt{n}\), where \(\sigma_Y\) is the standard deviation of \(Y = h(X)\), or a confidence interval.

- More advanced methods can reduce variance, but may be difficult to implement in practice.
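
As a reminder of what reporting the standard error looks like in practice, here is a minimal sketch using only the Python standard library. The helper name `monte_carlo_mean` and the target \(\mathbb{E}[X^2]\) with \(X \sim N(0, 1)\) (exact value 1) are assumptions chosen for illustration; they are not taken from the course materials.

```python
import math
import random
import statistics

def monte_carlo_mean(h, sampler, n, seed=0):
    """Estimate E[h(X)] and its standard error from n independent draws."""
    rng = random.Random(seed)
    values = [h(sampler(rng)) for _ in range(n)]
    estimate = statistics.fmean(values)
    # Plug-in standard error: sample standard deviation of h(X) over sqrt(n).
    std_error = statistics.stdev(values) / math.sqrt(n)
    return estimate, std_error

# Illustrative target: E[X^2] for X ~ N(0, 1), whose exact value is 1.
estimate, std_error = monte_carlo_mean(
    h=lambda x: x**2,
    sampler=lambda rng: rng.gauss(0.0, 1.0),
    n=10_000,
)
ci_low = estimate - 1.96 * std_error
ci_high = estimate + 1.96 * std_error
print(f"estimate = {estimate:.3f}, standard error = {std_error:.3f}")
print(f"approximate 95% CI: ({ci_low:.3f}, {ci_high:.3f})")
```

The interval uses the normal approximation, which is reasonable here because \(n\) is large.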

Uses of Monte Carlo

- Estimate expectations / quantiles;

- Calculate deterministic quantities (framed as stochastic expectations);

- Optimization (problem 3 on HW5).

Sampling Distributions

So Far…

We’re 12 weeks in and haven’t said anything about how to quantify uncertainties in model estimation.

Let’s talk about that.

Implications of Sampling Variability

- The data is one realization of a stochastic process.

- Estimates of statistical quantities (parameters, test statistics, etc.) depend on the data.

- If we were to “re-run the tape”, we would get different data, and therefore different estimates.

How To Quantify Sampling Variability

- Standard errors: how much would we expect this quantity to vary from one data replication to another?

- Confidence intervals: what are the parameters that might have been expected to produce this data with some pre-assigned probability?

But for both of these, we need to know the sampling distribution: the underlying distribution of estimates across different data replications.
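
When the data-generating process is known, "re-running the tape" can be done literally by simulation. The sketch below assumes exponential data with a made-up rate and uses the sample mean as the statistic; both choices are illustrative only. With real data only one replication is available, which is why the sampling distribution has to be estimated some other way.

```python
import math
import random
import statistics

rng = random.Random(1)
n = 50            # observations per data set
n_rep = 2_000     # number of simulated data replications
true_rate = 2.0   # assumed exponential rate, chosen for illustration

# "Re-run the tape": draw a new data set and recompute the estimate each time.
estimates = []
for _ in range(n_rep):
    data = [rng.expovariate(true_rate) for _ in range(n)]
    estimates.append(statistics.fmean(data))

# The spread of the estimates across replications is the standard error.
simulated_se = statistics.stdev(estimates)
theoretical_se = (1.0 / true_rate) / math.sqrt(n)   # sd of Exp(rate) is 1/rate

# Empirical 2.5% and 97.5% quantiles of the simulated sampling distribution.
cuts = statistics.quantiles(estimates, n=40)
lower, upper = cuts[0], cuts[-1]

print(f"simulated SE = {simulated_se:.4f}, theoretical SE = {theoretical_se:.4f}")
print(f"central 95% of estimates: ({lower:.3f}, {upper:.3f})")
```

The list `estimates` is a sample from the sampling distribution of the mean, so a histogram of it shows the kind of distribution the standard error and confidence interval summarize.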

Sampling Distributions

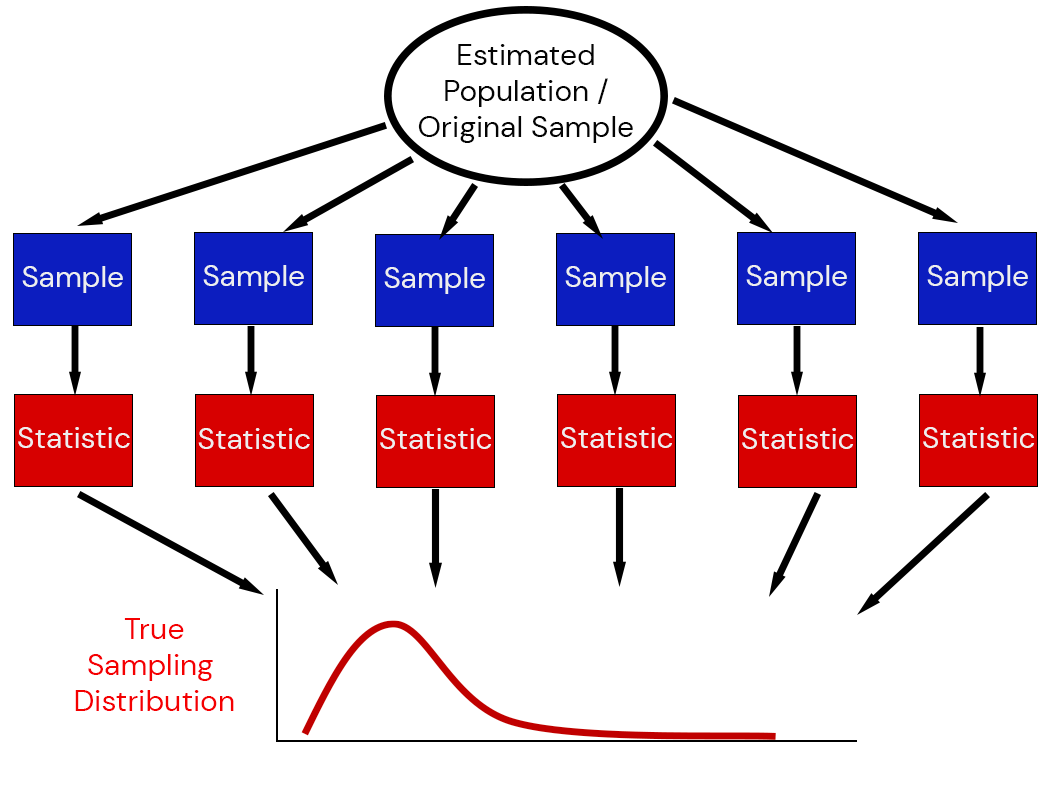

The sampling distribution of a statistic captures the uncertainty associated with random samples.

Estimating Sampling Distributions

- Special Cases: can derive closed-form representations of sampling distributions (think statistical tests)

- Asymptotics: Central Limit Theorem or Fisher Information

Special Case: Linear Regression

Theory around linear regression: if the data is truly generated by some linear model (or something reasonably close), then

\[\frac{\hat{\beta} - \beta}{\hat{\text{se}}\left[\hat{\beta}\right]} \sim t_{n-2}\]

This means that even if the model is correctly specified, the standardized estimates of a coefficient \(\beta\) can be expected to follow a \(t\) distribution based on sampling variability.
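
A small simulation can make this concrete. The sketch below assumes a simple linear regression with normal errors, a fixed design of \(n = 10\) points, and made-up "true" coefficients; the helper `slope_t_statistic` is hypothetical. It checks how often the standardized slope falls within the \(t_8\) and standard normal 97.5% quantiles (2.306 and 1.960).

```python
import math
import random

def slope_t_statistic(x, y, true_slope):
    """Standardized slope (beta_hat - beta) / se_hat for simple linear regression."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    intercept = y_bar - slope * x_bar
    rss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    # Residual variance uses n - 2 degrees of freedom (two fitted coefficients).
    se_hat = math.sqrt(rss / (n - 2) / sxx)
    return (slope - true_slope) / se_hat

rng = random.Random(2)
n, n_rep = 10, 4_000
intercept, slope, sigma = 1.0, 0.5, 2.0   # assumed "true" model for the simulation
x = [float(i) for i in range(n)]          # fixed design

t_stats = []
for _ in range(n_rep):
    y = [intercept + slope * xi + rng.gauss(0.0, sigma) for xi in x]
    t_stats.append(slope_t_statistic(x, y, slope))

T_CRIT = 2.306   # 97.5% quantile of t with n - 2 = 8 degrees of freedom
Z_CRIT = 1.960   # 97.5% quantile of the standard normal
coverage_t = sum(abs(t) <= T_CRIT for t in t_stats) / n_rep
coverage_z = sum(abs(t) <= Z_CRIT for t in t_stats) / n_rep
print(f"fraction within t quantiles: {coverage_t:.3f}")
print(f"fraction within normal quantiles: {coverage_z:.3f}")
```

The fraction within the \(t\) quantiles should be close to 0.95, while the normal quantiles cover less, reflecting the heavier tails of \(t_{n-2}\) at small \(n\).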

Fisher Information

Fisher Information: \[\mathcal{I}_x(\theta) = -\mathbb{E}\left[\frac{\partial^2}{\partial \theta_i \partial \theta_j} \log \mathcal{L}(\theta | x)\right]\]

Observed Fisher Information (uses observed data and calculated at the MLE): \(\mathcal{I}_{\tilde{x}}(\hat{\theta})\)

Fisher Information and Standard Errors

Asymptotic result: \[\sqrt{n}\left(\hat{\theta}_\text{MLE} - \theta^*\right) \to N\left(0, \left(\mathcal{I}_x(\theta^*)\right)^{-1}\right)\]

Sampling distribution based on observed data: \[\theta \sim N\left(\hat{\theta}_\text{MLE}, \left(n\mathcal{I}_{\tilde{x}}(\hat{\theta}_\text{MLE})\right)^{-1}\right)\]
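
For a model where the second derivative is easy to compute by hand, the recipe above reduces to a few lines. The sketch below assumes exponential data with an unknown rate (the true rate and sample size are made up for illustration). The per-observation log-likelihood is \(\log \lambda - \lambda x\), so the per-observation information is \(1 / \lambda^2\).

```python
import math
import random
import statistics

rng = random.Random(3)
true_rate = 2.0   # assumed for illustration
n = 200
data = [rng.expovariate(true_rate) for _ in range(n)]

# MLE of the exponential rate: 1 / sample mean.
rate_mle = 1.0 / statistics.fmean(data)

# Per-observation log-likelihood: log(rate) - rate * x.
# Its second derivative in rate is -1 / rate**2, so the per-observation
# information evaluated at the MLE is 1 / rate_mle**2.
info_per_obs = 1.0 / rate_mle**2

# Variance of the approximate sampling distribution: (n * I)^(-1).
std_error = math.sqrt(1.0 / (n * info_per_obs))
ci = (rate_mle - 1.96 * std_error, rate_mle + 1.96 * std_error)
print(f"MLE = {rate_mle:.3f}, standard error = {std_error:.3f}")
print(f"approximate 95% CI: ({ci[0]:.3f}, {ci[1]:.3f})")
```

In this model the standard error simplifies to \(\hat{\lambda} / \sqrt{n}\), which gives a quick check on the calculation.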

Estimating Fisher Information

- Can be done with automatic differentiation or (sometimes hard) calculations;

- May be singular (no inverse, so standard errors are undefined) for complex models;

- May not be a good approximation of the variance for finite samples!
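
The first and last points can be illustrated together. The sketch below swaps in central finite differences as a simple stand-in for automatic differentiation, and then compares the resulting Fisher-based standard error with the actual spread of the MLE across simulated data sets. The exponential model, the small sample size \(n = 10\), and the helper names are all assumptions made for illustration.

```python
import math
import random
import statistics

def log_likelihood(rate, data):
    """Exponential log-likelihood, summed over all observations."""
    return len(data) * math.log(rate) - rate * sum(data)

def observed_information(loglik, theta, data, step=1e-4):
    """Negative second derivative of loglik at theta, by central differences."""
    second = (loglik(theta + step, data) - 2.0 * loglik(theta, data)
              + loglik(theta - step, data)) / step**2
    return -second

rng = random.Random(4)
true_rate, n, n_rep = 2.0, 10, 5_000   # small n, assumed for illustration

mles, info_ses = [], []
for _ in range(n_rep):
    data = [rng.expovariate(true_rate) for _ in range(n)]
    mle = 1.0 / statistics.fmean(data)
    mles.append(mle)
    # The log-likelihood is summed over the data, so this is the total
    # information and no extra factor of n is needed.
    info = observed_information(log_likelihood, mle, data)
    info_ses.append(1.0 / math.sqrt(info))

simulated_sd = statistics.stdev(mles)            # actual spread of the MLE
typical_info_se = statistics.median(info_ses)    # typical Fisher-based SE
print(f"simulated sd of MLE: {simulated_sd:.3f}")
print(f"median Fisher-based SE: {typical_info_se:.3f}")
```

With only ten observations the Fisher-based standard error tends to understate the true variability of the MLE in this model; the gap shrinks as \(n\) grows.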

Next Classes

Wednesday: The Nonparametric Bootstrap

Friday: The Parametric Bootstrap

Assessments

Homework 5: Due next Friday (4/17)

Project Updates: Due Friday (4/10)

Quiz 3: Friday (4/10), covering material through the pre-break lectures on Monte Carlo.